The Essential Python Libraries for Data Science

Oct 29, 2019 18:09 · 920 words · 5 minute read

Photo by Johnson Wang on Unsplash

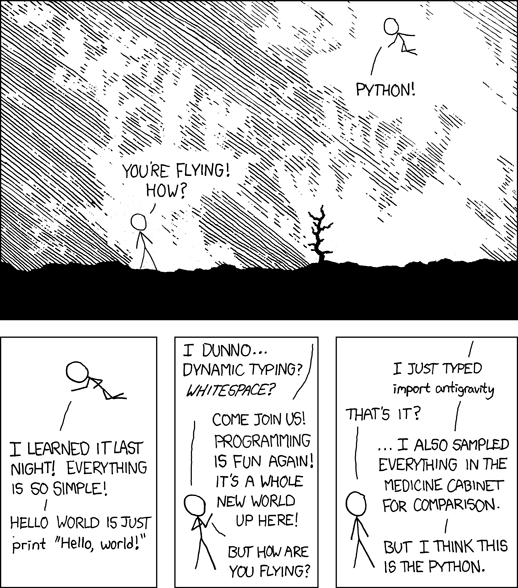

You’ve been learning about data science and want to start solving some problems right away. So, of course, you turned to Python.

Source: https://xkcd.com/353/

This article will introduce you to the essential data science libraries so you can start flying today.

The Core

Python has three core data science libraries upon which many others have been built.

- Numpy

- Scipy

- Matplotlib

For simplicity, you can think of Numpy as your go-to for arrays. Numpy arrays differ from standard Python lists in many ways, but the main points to remember are that they are faster, take up less space, and offer more functionality. It is important to note, though, that these arrays have a fixed size and type, both of which are set at creation. No infinitely appending new values like you might with a list.
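
To make the fixed size and type concrete, here is a minimal sketch. The values and variable names are made up for illustration:

```python
import numpy as np

# A Python list can hold anything; a Numpy array holds a single dtype.
values = [1.5, 2.0, 3.5, 4.0]
arr = np.array(values, dtype=np.float64)

# Vectorized arithmetic: the whole array is processed without a Python loop.
doubled = arr * 2

# The type is fixed at creation, so a float written into an
# integer array is truncated to fit.
ints = np.array([1, 2, 3], dtype=np.int64)
ints[0] = 7.9  # stored as 7

# The size is fixed too: np.append builds a new, larger array
# and leaves the original untouched.
longer = np.append(arr, 5.0)

print(doubled, ints, arr.shape, longer.shape)
```

Because `np.append` copies the whole array every time, growing an array one value at a time in a loop is usually slower than collecting values in a list and converting once at the end.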

Scipy is built on top of Numpy and provides many of the optimization, statistics, and linear algebra functions you will need. Numpy sometimes offers similar functions, but I tend to prefer Scipy’s versions. Want to calculate a correlation coefficient or create some normally distributed data? Scipy is the library for you.
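
Both of those tasks take only a few lines. The sketch below assumes `scipy.stats` is the module you reach for; the distribution parameters and the linear relationship between `x` and `y` are invented for the example:

```python
from scipy import stats

# Draw 500 samples from a normal distribution (mean 10, std 2).
# random_state makes the draw reproducible.
x = stats.norm.rvs(loc=10, scale=2, size=500, random_state=0)

# Build a second variable that depends linearly on x, plus noise.
noise = stats.norm.rvs(loc=0, scale=1, size=500, random_state=1)
y = 3 * x + noise

# Pearson correlation coefficient and its two-sided p-value.
r, p_value = stats.pearsonr(x, y)
print(f"r = {r:.3f}, p = {p_value:.3g}")
```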

Matplotlib is probably not winning any beauty awards, but it is the core library for plotting in Python. It has a ton of functionality and gives you fine-grained control over a plot when you need it.
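
As a rough illustration of that control, this sketch uses Matplotlib's object-oriented interface to set labels, line styles, and a legend explicitly. The output filename is hypothetical, and the `Agg` backend line is only there so the script runs without a display:

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend; drop this line in a notebook
import matplotlib.pyplot as plt
import numpy as np

x = np.linspace(0, 2 * np.pi, 100)

# One figure with one set of axes; every element is set explicitly.
fig, ax = plt.subplots(figsize=(6, 4))
ax.plot(x, np.sin(x), label="sin(x)")
ax.plot(x, np.cos(x), linestyle="--", label="cos(x)")
ax.set_xlabel("x")
ax.set_ylabel("value")
ax.set_title("Sine and cosine")
ax.legend()

# Hypothetical output path for this example.
fig.savefig("sine_cosine.png", dpi=150)
```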

2nd Generation

The core libraries are amazing and you will find yourself using them a lot. There are three 2nd generation libraries, though, which have significantly built on top of the core to give you more functionality with less code.

If you have been learning about data science and have not heard of Scikit-learn, then I’m not sure what to say. It is the library for machine learning in Python. It has incredible community support, amazing documentation, and a consistent, easy-to-use API. The library focuses on “core” machine learning: regression, classification, and clustering on structured data. It is not the library you want for other things such as deep learning or Bayesian machine learning.
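
The consistent API is easiest to see when two very different models go through the same `fit` and `score` calls. This is a minimal sketch; the choice of the bundled iris dataset and of these two models is mine, not a recommendation:

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Small built-in classification dataset, split into train and test sets.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

models = {
    "logistic regression": LogisticRegression(max_iter=1000),
    "random forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

# Every estimator exposes the same fit / score interface.
scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = model.score(X_test, y_test)  # mean accuracy on the test set
    print(f"{name}: {scores[name]:.2f}")
```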

Pandas was created to make data analysis easier in Python. Pandas makes it very easy to load structured data, calculate statistics on it, and slice and dice the data in whichever way you want. It is an indispensable tool during the data exploration and analysis phase, but I would not recommend using it in production because it generally does not scale very well to large datasets. You can get significant speed boosts in production by converting your Pandas code to raw Numpy.
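
Here is a small sketch of that workflow: summary statistics, a slice, a grouped aggregate, and finally a drop down to a raw Numpy array. The tiny weather table is invented for illustration; in practice you would load data with something like `pd.read_csv`:

```python
import numpy as np
import pandas as pd

# Made-up data standing in for a loaded dataset.
df = pd.DataFrame(
    {
        "city": ["Oslo", "Oslo", "Lima", "Lima", "Lima"],
        "temp_c": [4.0, 6.0, 19.0, 21.0, 20.0],
        "humidity": [80, 75, 60, 65, 70],
    }
)

# Summary statistics for the numeric columns.
summary = df.describe()

# Slice: keep only the rows for one city.
lima = df[df["city"] == "Lima"]

# Dice: mean temperature per city.
mean_temp = df.groupby("city")["temp_c"].mean()

# Drop to raw Numpy for the numeric work.
temps = df["temp_c"].to_numpy()
temp_f = temps * 9 / 5 + 32

print(summary, lima, mean_temp, temp_f, sep="\n\n")
```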

While Matplotlib is not the prettiest out of the box, Seaborn makes it easy to create beautiful visualizations. It is built upon Matplotlib, so you can still use Matplotlib functionality to augment or edit Seaborn charts. It also makes it a lot easier to create more complex chart types. Just check out the gallery for some inspiration:

Example gallery - seaborn 0.9.0 documentation (seaborn.pydata.org)

Source: https://phys.org/news/2019-08-all-optical-neural-network-deep.html

Deep Learning

With the incredible rise of deep learning, it would be wrong not to highlight the best Python packages in this area.

I am a huge fan of Pytorch. If you want to get started with deep learning while learning a library that makes it relatively easy to implement state-of-the-art deep learning algorithms, look no further than Pytorch. It is becoming the standard deep learning library for research, and its developers are adding a lot of functionality to make it more robust for production use cases. They provide a lot of great tutorials to get you started as well.
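
To give a feel for the library, here is a minimal sketch of a Pytorch training loop on synthetic data. The network size, learning rate, and number of epochs are arbitrary choices for illustration, not tuned values:

```python
import torch
from torch import nn

torch.manual_seed(0)  # reproducible weights and noise

# Synthetic data: y = 2x + 1 plus a little noise.
x = torch.linspace(-1, 1, 100).unsqueeze(1)
y = 2 * x + 1 + 0.1 * torch.randn_like(x)

# A tiny fully connected network.
model = nn.Sequential(nn.Linear(1, 16), nn.ReLU(), nn.Linear(16, 1))
loss_fn = nn.MSELoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

losses = []
for epoch in range(200):
    optimizer.zero_grad()        # clear gradients from the previous step
    loss = loss_fn(model(x), y)  # forward pass
    loss.backward()              # backpropagate
    optimizer.step()             # update the weights
    losses.append(loss.item())

print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```

The loop is explicit on purpose: every step (forward pass, loss, backward pass, update) is plain Python that you can inspect or change.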

In my opinion, Keras was the first library to make deep learning truly accessible. You can implement and train a deep learning model in tens of lines of code, which are very easy to read and understand. The downside of Keras is that the high-level abstraction can make it hard to implement newer research that is not currently supported (though they are improving in this area). It also supports multiple backends, namely TensorFlow and CNTK.

TensorFlow was built by Google and has the most support for putting deep learning into production. The original TensorFlow was pretty clunky in my opinion, but they have learned a lot, and TensorFlow 2.0 makes it a lot more accessible. While Pytorch is moving towards more production support, TensorFlow seems to be moving towards more usability.

Statistics

I would like to end with two great statistical modeling libraries in Python.

If you are coming over from R, you will probably be confused about why scikit-learn doesn’t give you p-values for your regression coefficients. If so, you need to look at statsmodels. This library, in my opinion, has the best support for statistical models and tests and even supports a lot of syntax from R.
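
As a sketch of what that looks like, the example below fits an ordinary least squares model with an R-style formula and reads the p-values off the result. The data is synthetic and the column names `x1`, `x2`, and `y` are placeholders:

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

# Synthetic data: y depends on x1 but not on x2.
rng = np.random.default_rng(0)
n = 200
df = pd.DataFrame({"x1": rng.normal(size=n), "x2": rng.normal(size=n)})
df["y"] = 1.0 + 2.0 * df["x1"] + rng.normal(scale=0.5, size=n)

# R-style formula: response on the left, predictors on the right.
results = smf.ols("y ~ x1 + x2", data=df).fit()

print(results.params)   # estimated coefficients
print(results.pvalues)  # p-value for each coefficient
```

Calling `results.summary()` prints a fuller regression table, similar to what `summary(lm(...))` gives you in R.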

Probabilistic programming and modeling is a ton of fun. If you are not familiar with this area, I would check out Bayesian Methods for Hackers. And the library you will want to use is PyMC3. It makes it very intuitive to define your probabilistic models and has a lot of support for state-of-the-art methods.

Go Fly

I’ll be the first to admit that there are many other amazing libraries in Python for data science. The goal of this post, though, was to focus on the essential. Armed with Python and these amazing libraries, you will be astonished by how much you can achieve. I hope this article can be a great jumping-off point for your foray into data science and only the beginning of all the amazing libraries you will discover.