How To Use Deep Learning Even with Small Data

Nov 9, 2019 05:59 · 1077 words · 6 minute read

You’ve heard the news: deep learning is the hottest thing since sliced bread. It promises to solve your most complicated problems for the small price of an enormous amount of data. The only problem is that you don’t work at Google or Facebook, and your data are scarce. So what are you to do? Can you still leverage the power of deep learning, or are you out of luck? Let’s look at how you might be able to use deep learning even with limited data, and why I think this might be one of the most exciting areas of future research.

Start Simple

Before we discuss methods for leveraging deep learning with limited data, please step back from the neural networks and build a simple baseline. It usually doesn’t take long to experiment with a few traditional models such as a random forest. This will help you gauge any potential lift from deep learning and give you a lot of insight into the tradeoffs between deep learning and other methods for your problem.
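As a minimal sketch of such a baseline, assuming scikit-learn is installed, a cross-validated random forest takes only a few lines. The synthetic dataset here is just a stand-in for your own features `X` and labels `y`.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

# Synthetic stand-in for a small dataset (assumption: replace with your own X, y).
X, y = make_classification(
    n_samples=300, n_features=20, n_informative=5, random_state=0
)

# A random forest with mostly default settings is a reasonable first baseline.
baseline = RandomForestClassifier(n_estimators=100, random_state=0)

# 5-fold cross-validation gives a less noisy estimate than a single split,
# which matters when data are scarce.
scores = cross_val_score(baseline, X, y, cv=5, scoring="accuracy")
print(f"Baseline accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```

Whatever number this prints for your data is the bar a deep learning model has to clear to justify its extra complexity.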

Get More Data

This might sound ridiculous, but have you actually considered whether you can gather more data? I’m amazed by how often I suggest this to companies and they look at me like I am crazy. Yes, it is okay to invest time and money into gathering more data. In fact, this can often be your best option. For example, maybe you are trying to classify rare bird species and have very limited data. You will almost certainly have an easier time solving this problem by just labeling more data. Not sure how much data you need to gather? Try plotting learning curves as you add data and look at the change in model performance.
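One way to produce a learning curve, sketched here with scikit-learn's `learning_curve` helper, is shown below. The synthetic data and the random forest are assumptions for illustration; substitute your own dataset and model.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import learning_curve

# Synthetic stand-in for your labeled data (assumption).
X, y = make_classification(
    n_samples=400, n_features=20, n_informative=5, random_state=0
)

# Train on 10% to 100% of the available training folds and record
# cross-validated accuracy at each size.
train_sizes, train_scores, val_scores = learning_curve(
    RandomForestClassifier(n_estimators=50, random_state=0),
    X,
    y,
    train_sizes=np.linspace(0.1, 1.0, 5),
    cv=5,
    scoring="accuracy",
)

for n, score in zip(train_sizes, val_scores.mean(axis=1)):
    print(f"{int(n):4d} training samples -> validation accuracy {score:.3f}")
```

If validation accuracy is still climbing at the largest training size, more labeled data will probably help. If it has flattened out, extra data is less likely to pay off.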

Fine-Tuning

Photo by Drew Patrick Miller on Unsplash

Okay. Let’s assume you now have a simple baseline model and that gathering more data is either not possible or too expensive. The most tried and true method at this point is to leverage pre-trained models and then fine-tune them for your problem.

The basic idea with fine-tuning is to take a very large dataset that is hopefully somewhat similar to your domain, train a neural network on it, and then fine-tune this pre-trained network on your smaller dataset. You can learn more in this article.

For image classification problems, the go-to dataset is ImageNet. This dataset contains millions of images across many classes of objects and thus can be useful for many types of image problems. It even includes animals and thus might be helpful for rare bird classification.

To get started with some code for fine-tuning, check out PyTorch’s great tutorial.

Data Augmentation

If you can’t get more data and you don’t have any success with fine-tuning on large datasets, data augmentation is usually your next best bet. It can also be used in conjunction with fine-tuning.

The idea behind data augmentation is simple: transform the inputs in a way that produces new data without changing the label.

For example, if you have a picture of a cat and rotate the image, it is still a picture of a cat. Thus, this would be good data augmentation. On the other hand, if you have a picture of a road and want to predict the appropriate steering angle (self-driving cars), rotating the image changes the appropriate steering angle. This would not be okay unless you also adjust the steering angle appropriately.
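The sketch below illustrates the same point with a horizontal flip rather than a rotation, since the matching label correction for steering is then just a sign change. The function names are hypothetical, images are plain lists of pixel rows, and the steering convention (positive and negative angles are mirror images of each other) is an assumption.

```python
def hflip(image):
    """Mirror an image, given as a list of pixel rows, left to right."""
    return [row[::-1] for row in image]


def augment_classification(image, label):
    # A mirrored cat is still a cat, so the label is unchanged.
    return hflip(image), label


def augment_steering(image, steering_angle):
    # Mirroring a road scene swaps left and right, so the angle changes sign.
    return hflip(image), -steering_angle


image = [[0, 1, 2],
         [3, 4, 5]]

print(augment_classification(image, "cat"))  # ([[2, 1, 0], [5, 4, 3]], 'cat')
print(augment_steering(image, 0.25))         # ([[2, 1, 0], [5, 4, 3]], -0.25)
```

The design question is always the same: for each transformation, decide whether the label stays fixed or has to be transformed along with the input.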

Data augmentation is most common for image classification problems and you can find techniques here.

You can often think of creative ways to augment data in other domains such as NLP (see some examples here) and people are also experimenting with GANs to generate new data. If the GAN approach is of interest, I would take a look at DADA: Deep Adversarial Data Augmentation.

Cosine Loss

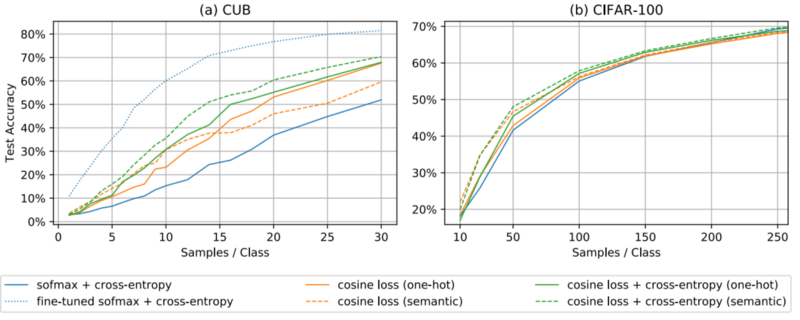

A recent paper, Deep Learning on Small Datasets without Pre-Training using Cosine Loss, found a 30% increase in accuracy for small datasets when switching the loss function from categorical cross-entropy loss to a cosine loss for classification problems. Cosine loss is simply 1 - cosine similarity.

You can see in the above graph how the performance changes based on the number of samples per class and how fine-tuning can be very valuable for some small datasets (CUB) and not as valuable for others (CIFAR-100).
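To make the definition of cosine loss concrete, here is a small sketch in plain Python. It assumes the loss is computed between the network's output vector and the one-hot encoded class label, which is my reading of the paper. In practice you would use your framework's tensor operations instead.

```python
import math


def cosine_loss(output, target):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(o * t for o, t in zip(output, target))
    norm_output = math.sqrt(sum(o * o for o in output))
    norm_target = math.sqrt(sum(t * t for t in target))
    return 1.0 - dot / (norm_output * norm_target)


one_hot = [0.0, 1.0, 0.0]  # the true class is index 1

# Output pointing almost in the direction of the true class: loss near 0.
print(cosine_loss([0.1, 2.0, 0.1], one_hot))

# Output orthogonal to the true class: loss of 1.
print(cosine_loss([2.0, 0.0, 0.0], one_hot))
```

Only the direction of the output vector matters, not its length, because cosine similarity normalizes both vectors.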

Go Deep

In a NIPS paper, Modern Neural Networks Generalize on Small Data Sets, the authors view deep neural networks as ensembles. Specifically, “Rather than each layer presenting an ever-increasing hierarchy of features, it is plausible that the final layers offer an ensemble mechanism.”

My takeaway is that for small data, you should build your networks deep to take advantage of this ensemble effect.

Autoencoders

There has been some success using stacked autoencoders to pre-train a network so that it starts from better weights. This can help you avoid local optima and other pitfalls of bad initialization. That said, Andrej Karpathy recommends not getting over-excited about unsupervised pre-training.

If you need to brush up on autoencoders, you can review this Stanford deep learning tutorial. The basic idea is to build a neural network that predicts its own inputs.
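As a rough illustration, the sketch below trains a single-layer autoencoder in PyTorch and then reuses its encoder as the first layer of a classifier. The layer sizes, the random stand-in data, and the three-class head are all assumptions. A stacked autoencoder would repeat the same idea layer by layer.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Unlabeled stand-in data: 64 samples with 20 features each (assumption).
x = torch.randn(64, 20)

# The encoder compresses 20 features to 4; the decoder tries to undo that.
encoder = nn.Sequential(nn.Linear(20, 4), nn.ReLU())
decoder = nn.Linear(4, 20)
autoencoder = nn.Sequential(encoder, decoder)

optimizer = torch.optim.Adam(autoencoder.parameters(), lr=1e-2)
loss_fn = nn.MSELoss()

losses = []
for _ in range(200):
    optimizer.zero_grad()
    loss = loss_fn(autoencoder(x), x)  # the target is the input itself
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(f"reconstruction loss: {losses[0]:.3f} -> {losses[-1]:.3f}")

# The pre-trained encoder now supplies the starting weights for the first
# layer of a supervised model, which is then trained on the labeled data.
classifier = nn.Sequential(encoder, nn.Linear(4, 3))
```

No labels are needed for the pre-training step, which is the appeal when labeled data are scarce but unlabeled data are not.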

Prior Knowledge

Photo by Glen Noble on Unsplash

Last, but not least, try to find ways to incorporate domain-specific knowledge to guide the learning process. For example, in Human-level concept learning through probabilistic program induction, the authors construct a model that builds concepts from parts by leveraging prior knowledge in the process. This led to human-level performance and outperformed the deep learning approaches at that time.

You can also use domain knowledge to limit the inputs to the network to reduce dimensionality or adjust the network architecture to be smaller.

I bring this up as the final option because incorporating prior knowledge can be challenging and is generally the most time-consuming of the options.

Making Small Cool Again

Hopefully, this article has given you some thoughts on how to leverage deep learning techniques even with limited data. I personally find that this problem is not discussed as much as it should be, even though it has very exciting implications.

There are a vast number of problems that have very limited data and for which acquiring more data is very expensive or impossible. Examples include detecting rare diseases and predicting educational outcomes. Finding ways to apply some of our best techniques, such as deep learning, to these problems is extremely exciting! Even Andrew Ng agrees: