How To Create Unique Pokémon Using GANs

Mar 28, 2020 13:45 · 902 words · 5 minute read

My son is really into Pokemon. I don’t get it, but I guess that’s beside the point. I did start to wonder, though, if I could create new Pokemon cards for him automatically using deep learning.

I ended up having a bit of success generating Pokemon-like images using Generative Adversarial Networks (GANs) and I thought others might enjoy seeing the process.

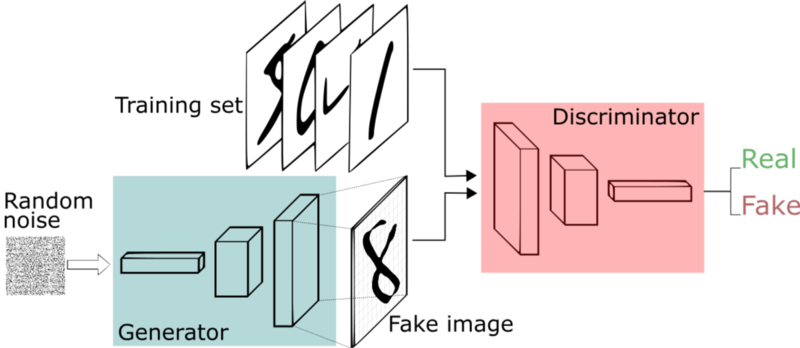

Generative Adversarial Networks

I don’t want to spend a lot of time discussing what GANs are, but the above image is a very simple explanation of the process.

You train two networks: a discriminator and a generator. The generator learns how to take in random noise and generate images that look like images from the training data. It does this by feeding its generated images to a discriminator network, which is trained to distinguish between real and generated images.

The generator is optimized to get better and better at fooling the discriminator and the discriminator is optimized to get better and better at detecting the generated images. Thus, they both improve together.
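
As a rough illustration of that tug-of-war, here is a minimal sketch of the two losses in plain Python. It assumes the discriminator outputs a probability that an image is real and uses the common binary cross-entropy formulation. The function names are made up for this example and are not taken from any library.

```python
import math

def bce(prob, target):
    """Binary cross-entropy for a single prediction (target is 0 or 1)."""
    eps = 1e-12  # keep the probability away from 0 and 1 to avoid log(0)
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1 - target) * math.log(1.0 - prob))

def discriminator_loss(d_real, d_fake):
    """The discriminator wants real images scored near 1 and generated ones near 0."""
    return bce(d_real, 1) + bce(d_fake, 0)

def generator_loss(d_fake):
    """The generator wants its images scored near 1, i.e. mistaken for real."""
    return bce(d_fake, 1)

# Early on, the discriminator spots fakes easily: low D loss, high G loss.
print(discriminator_loss(d_real=0.9, d_fake=0.1), generator_loss(d_fake=0.1))
# Later, the generator fools it about half the time: D loss rises, G loss falls.
print(discriminator_loss(d_real=0.5, d_fake=0.5), generator_loss(d_fake=0.5))
```

Each network is updated to lower its own loss, and lowering one tends to raise the other, which is what pushes both to improve.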

If you would like to learn more, there are many amazing articles, books, and YouTube videos you can find via Google.

So my hypothesis was that I could train a GAN with real Pokemon images as my training set. The result would be a generator able to create novel Pokemon! Excellent!

The Data

My first challenge was finding Pokemon images. Fortunately, Kaggle datasets came to the rescue!

Someone had already had a similar idea. It sounds like he didn’t have much success generating new Pokemon images, but since he had spent the time gathering the 800+ images, he decided to upload them as a Kaggle dataset.

This saved me a ton of time.

I also learned that there really are only about 800 Pokemon so it wouldn’t be possible to expand this data set with additional Pokemon.

Here is an example of one of the images (256 x 256):

The Algorithm

Now that I had data, I had to pick which type of GAN I wanted to use. There are probably hundreds of variations of GANs that exist, but I have seen good results in the past using DCGAN.

DCGAN eliminates any fully connected layers from the neural networks, uses transposed convolutions for up-sampling, and replaces max pooling with convolution strides (among other things).
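
To make the up-sampling part concrete, here is a small sketch that traces how the image size grows through a stack of transposed convolutions. The layer settings (a 4 x 4 kernel, with stride 2 and padding 1 for the doubling layers) are assumptions that match my understanding of the usual DCGAN generator layout, and the helper names are hypothetical.

```python
def conv_transpose_out(size, kernel, stride, padding):
    """Output side length of a transposed convolution (no dilation or output padding)."""
    return (size - 1) * stride - 2 * padding + kernel

def generator_sizes(layers, start=1):
    """Trace the spatial size through a stack of (kernel, stride, padding) layers."""
    sizes = [start]
    for kernel, stride, padding in layers:
        sizes.append(conv_transpose_out(sizes[-1], kernel, stride, padding))
    return sizes

# Assumed layout: one layer that projects the 1 x 1 noise vector to 4 x 4,
# followed by four layers that each double the resolution.
layers = [(4, 1, 0)] + [(4, 2, 1)] * 4
print(generator_sizes(layers))  # [1, 4, 8, 16, 32, 64]
```

With the same pattern, reaching 256 x 256 would take two more doubling layers.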

I like DCGANs because, compared to other GANs I have tried, they seem to be more robust and thus easier to train without significant tuning of hyper-parameters.

In fact, DCGAN is so popular that PyTorch has a pretty good implementation as one of its examples. Better still, their example can read input directly from a folder. Thus, with the following command, I was able to start training my GAN:

python main.py --dataset folder --dataroot ~/Downloads/pokemon/ --cuda --niter 10000 --workers 8

This command reads the images from the ~/Downloads/pokemon folder, runs on my GPU with 8 workers for loading the data, and runs for 10,000 epochs (the example’s niter flag counts passes over the whole data set, not individual batches).
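
One practical detail, based on my understanding that the example’s folder mode loads data with torchvision’s `ImageFolder`: that loader looks for images inside subdirectories of the data root rather than directly in it. If your copy of the data set is a flat folder of images, a small helper along these lines can nest them first. The name `nest_images` and the subfolder name `all` are hypothetical choices for this sketch.

```python
from pathlib import Path
import shutil

IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg"}

def nest_images(root, subdir="all"):
    """Move image files sitting directly in `root` into `root/subdir`.

    Returns the number of files moved. Non-image files are left alone.
    """
    root = Path(root).expanduser()
    target = root / subdir
    target.mkdir(exist_ok=True)
    moved = 0
    for path in sorted(root.iterdir()):
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            shutil.move(str(path), str(target / path.name))
            moved += 1
    return moved
```

Calling `nest_images("~/Downloads/pokemon")` once before training would be enough. Running it a second time moves nothing.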

It turns out 10,000 epochs is a lot for this problem, but I wanted to see how far I could push it. Let’s take a look!

The Results

The very first step starts with a network which knows nothing, so all that is generated is noise:

Each box is a 64 x 64 pixel image showing one attempt by our generator to create a Pokemon. Since our grid is 8 x 8, we get 64 different attempts at once. I scaled the images down to 64 x 64 because this algorithm becomes unstable when trying to generate larger images (or at least it did for me).
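
For the curious, here is a quick sketch of the sample sheet arithmetic. It assumes the sheet is laid out the way torchvision’s `make_grid` does by default (8 images per row, 2 pixels of padding around each image), which is my understanding of how the example saves its samples. The helper `grid_size` is hypothetical.

```python
import math

def grid_size(n_images, image_size=64, nrow=8, padding=2):
    """Pixel (height, width) of a padded grid of square images."""
    cols = min(nrow, n_images)
    rows = math.ceil(n_images / cols)
    cell = image_size + padding  # each image plus its share of padding
    return rows * cell + padding, cols * cell + padding

print(grid_size(64))  # (530, 530)
```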

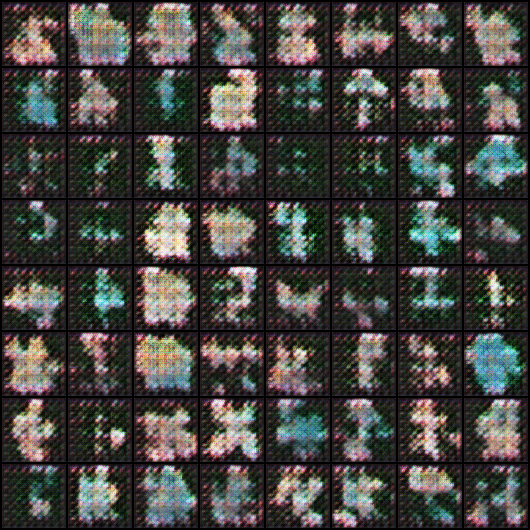

After 50 epochs, we start to see some life:

After 150, things become a bit clearer:

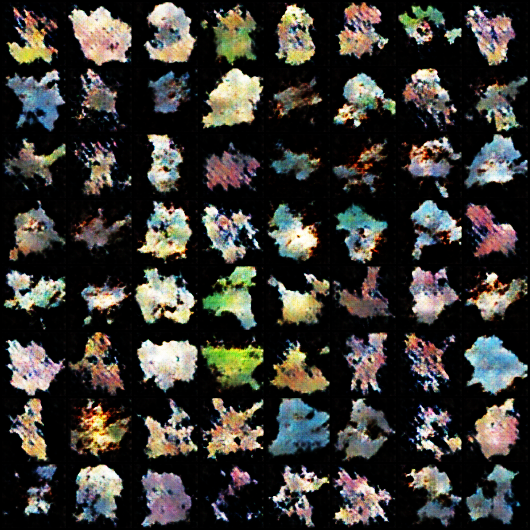

After 3,700, you have some halfway decent 64 x 64 attempts at Pokemon. After this, it started to diverge to worse results:

Now, I know what you’re thinking: those don’t look like Pokemon at all!

I would ask you to zoom your browser out to about 25% and look again. At a distance, they look surprisingly similar to real Pokemon.

The issue is that, since we are training on 64 x 64 images, it is too easy for the discriminator to be fooled by images that are merely Pokemon-like in shape and color, so the generator doesn’t need to improve.

Next Steps

The obvious next step, in my mind, is to train a higher resolution GAN. In fact, I already made some attempts at just this.

My first attempt was to rewrite the PyTorch code to scale to 256 x 256 images. The code worked, but the DCGAN broke down and I couldn’t stabilize the training. I believe the main reason is that I only have about 800 images. While I do some data augmentation, it isn’t enough to train a higher resolution DCGAN.

I then tried using a Relativistic GAN, which has seen success training on higher resolution data with small data sets, but I wasn’t able to get that to work either.
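
For context, the core idea of a relativistic GAN is that the discriminator judges whether a real image looks more realistic than a generated one, instead of scoring each image in isolation. Here is a minimal sketch of the pairwise "relativistic standard GAN" losses, assuming raw (pre-sigmoid) discriminator scores. The function names are illustrative, and this is not necessarily the exact variant used in the experiment above.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def relativistic_d_loss(c_real, c_fake):
    """Discriminator: the real image should score higher than the fake one."""
    return -math.log(sigmoid(c_real - c_fake))

def relativistic_g_loss(c_real, c_fake):
    """Generator: the fake image should score higher than the real one."""
    return -math.log(sigmoid(c_fake - c_real))

# When the real image clearly outscores the fake, the discriminator's loss is
# small and the generator's loss is large.
print(relativistic_d_loss(2.0, -1.0), relativistic_g_loss(2.0, -1.0))
```

Because only the difference between the two scores matters, the generator is rewarded both for raising the score of its fakes and for lowering the score of real images.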

I’m going to continue to try some other ideas to generate higher resolution Pokemon and if I get something to work, I will post the technique I used.

If nothing else, maybe we can call these generated Pokemon Smoke type Pokemon?
